Can AI Agents Win a Modeling Challenge? A Replicable Experiment

To get the most out of AI agents, we need to remove human bottlenecks and increase leverage.

If you want to jump straight to the implementation, the full code is here: GitHub.

What Was Really Being Tested

My company recently held a modeling competition in which participants were allowed to use only AI tools, without writing any code themselves. The objective was straightforward: maximize the AUC of a supervised learning task.

In tabular supervised learning, the practical toolkit is already quite mature. Gradient boosting models remain the dominant high-performance baseline, and in real-world applications the largest gains often come not from changing the model class, but from accumulating more data, engineering better features, and defining targets that better reflect business objectives. Model fine-tuning can help at the margin, but it rarely produces step-change improvements. Even in sequential modeling, much of the value can be understood as learning richer representations of the underlying behavior and structure.

For that reason, the most interesting question was not whether an LLM could write code to implement ideas that human practitioners had already provided. If humans specify the feature candidates, the modeling tricks, and the overall direction, then the exercise becomes largely a test of coding and execution. That is useful, but not especially interesting. A more meaningful test is whether an LLM can autonomously discover promising ideas for itself: what features to construct, which techniques are worth trying, how to interpret intermediate results, and how to iterate toward better performance under a clear objective.

Seen from this perspective, the purpose of the competition was, in my view, neither to discover a fundamentally new classification algorithm nor to implement solutions based on human insights. It was to test whether an LLM could independently reproduce the practical workflow behind strong credit risk modeling: generating hypotheses, engineering useful features, running experiments, learning from feedback, and improving performance through iteration. Put differently, if the playbook used by experienced practitioners is only partially specified, how much of it can an LLM recover on its own?

This also made the exercise a test of instruction design. Beyond model performance, it offered a way to understand how tasks should be framed so that an LLM can explore the solution space productively rather than simply execute a predefined recipe.

The Experiment Setup

At first glance, the objective may seem clear enough: give the LLM a target metric and ask it to iterate on its own. But things work more smoothly in human teams because people already share a large amount of tacit context: what counts as a valid experiment, what shortcuts are unacceptable, how performance should be evaluated, and when a result is worth keeping. For an LLM, many of these assumptions have to be made explicit.

To make the exercise meaningful, we needed to specify a set of operating rules that human teams would often leave implicit.

The experimental setup was not fully specified from the start; as I observed the agent’s behavior, I iteratively refined and steered it. For example, in the later stages, I found that the acceptance threshold had become too strict.

Scope. We had to define what the agent was allowed to modify, what it was not allowed to touch, and what kinds of actions were permitted or prohibited.

Integrity. The agent could use only data available before the application date. It also had to check for leakage before proceeding, especially when a single feature appeared to perform suspiciously well.

Evaluation. The evaluation methodology had to be fixed in advance. Otherwise, the LLM could improve reported scores simply by changing the validation setup rather than improving the model itself.

Promotion criteria. We needed to define what magnitude of improvement was sufficient for a new approach to be accepted and carried forward.

Logging. Like humans, LLMs do not naturally produce good documentation unless asked to do so. To make their work inspectable—and to give the agent a usable record of its own progress—we had to explicitly require logging.

Resource constraints. The agent needed rules for efficient experimentation: cache generated features for reuse, avoid recomputing unnecessarily, explore ideas in parallel where possible, and operate within limits on the number and scale of experiments.

Simplicity. Some human judgment still had to be encoded into the setup. In general, we preferred simpler models when they delivered performance comparable to more complex alternatives.

Stopping criteria. We also had to define when the agent should stop iterating, rather than continuing to search indefinitely for marginal gains.

These rules were not just administrative details. They were part of the experiment itself. If the goal was to test whether an LLM could behave like a disciplined modeler, then the environment had to specify the constraints under which disciplined modeling takes place.
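To make the Integrity rule concrete, here is a minimal sketch of a leakage screen that flags any single feature whose standalone AUC looks too good to be true. It is an illustration under stated assumptions, not the check the agent actually ran: the function names, the 0.95 threshold, and the feature names in the demo are all hypothetical.

```python
from typing import Dict, List, Sequence


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Rank-based AUC (Mann-Whitney U), using average ranks for tied scores."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of scores tied with position i.
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUC needs at least one positive and one negative label")
    pos_rank_sum = sum(r for r, y in zip(ranks, labels) if y == 1)
    return (pos_rank_sum - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


def flag_suspicious_features(
    features: Dict[str, Sequence[float]],
    labels: Sequence[int],
    threshold: float = 0.95,  # assumed cutoff, not taken from the article
) -> List[str]:
    """Return names of features whose single-feature AUC exceeds the threshold."""
    flagged = []
    for name, values in features.items():
        a = auc(labels, values)
        # A feature that ranks the target in reverse is just as suspicious.
        if max(a, 1 - a) >= threshold:
            flagged.append(name)
    return flagged


if __name__ == "__main__":
    # Toy data with hypothetical feature names, for illustration only.
    demo_labels = [0, 0, 1, 1]
    demo_features = {
        "leaky_flag": [0, 0, 1, 1],  # separates the classes perfectly
        "income": [3, 1, 2, 4],      # only partially informative
    }
    print(flag_suspicious_features(demo_features, demo_labels))
```

A flagged feature is not proof of leakage. It is a prompt to check whether the feature uses information from after the application date before the experiment proceeds.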

You can find the full instructions given to the LLM here.

How the LLM Iterated

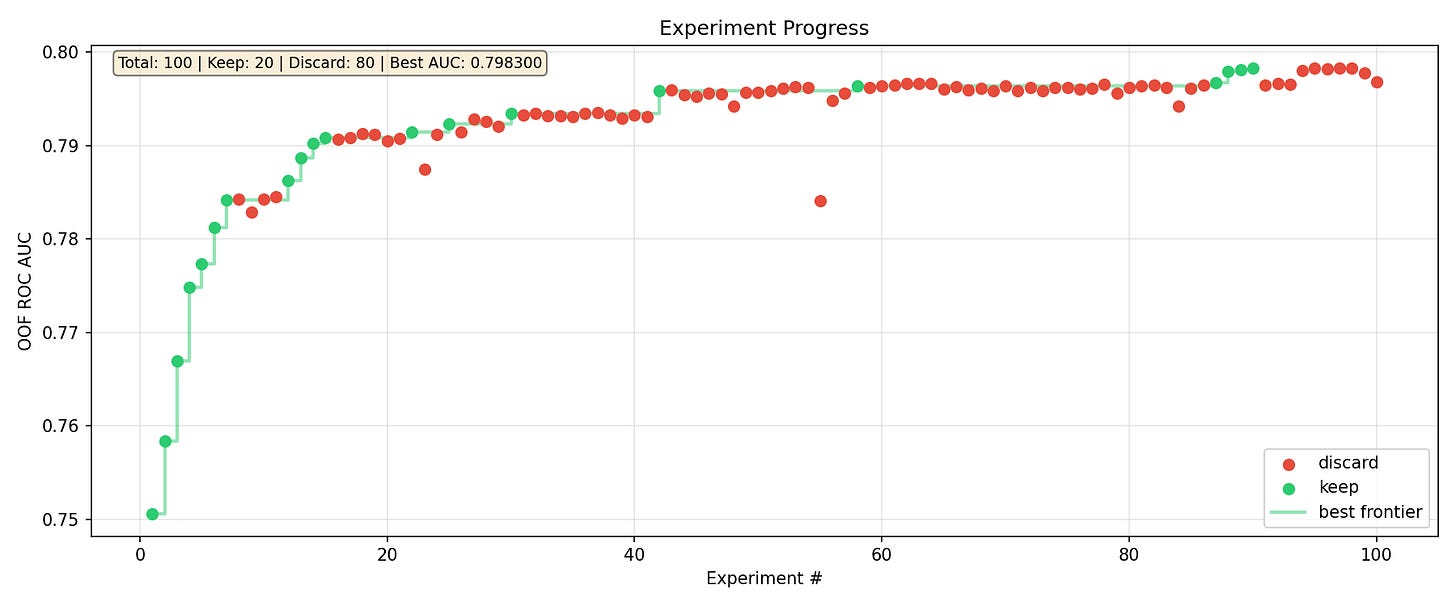

The LLM took around 48 hours to reach the final result. In the first 24 hours, I did not prompt it at all, and it reached an AUC of 0.793424 at round 31. Then it got stuck for 12 consecutive rounds. I prompted it to use web search to look for ideas; I had already asked for this in the original prompt, but it preferred to rely on its internal knowledge.
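The interaction between promotion criteria, logging, and a plateau like the 12 stalled rounds above can be sketched as a small tracker. This is a hypothetical illustration rather than the code used in the experiment: the class name, the `min_gain` threshold, and the `patience` value are assumptions, and the AUC values in the demo are made up.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RunTracker:
    """Tracks promotions and detects a plateau across experiment rounds."""

    min_gain: float = 0.0005  # assumed promotion threshold, not from the article
    patience: int = 12        # assumed number of stalled rounds before escalating
    best_auc: float = 0.0
    rounds_since_promotion: int = 0
    log: List[dict] = field(default_factory=list)

    def record(self, round_id: int, auc: float) -> bool:
        """Log one round and return True if the result is promoted."""
        promoted = auc - self.best_auc >= self.min_gain
        if promoted:
            self.best_auc = auc
            self.rounds_since_promotion = 0
        else:
            self.rounds_since_promotion += 1
        self.log.append({"round": round_id, "auc": auc, "promoted": promoted})
        return promoted

    @property
    def plateaued(self) -> bool:
        """True once `patience` consecutive rounds have failed to be promoted."""
        return self.rounds_since_promotion >= self.patience


if __name__ == "__main__":
    # Illustrative values only; these are not results from the experiment.
    tracker = RunTracker(min_gain=0.001, patience=3)
    for round_id, auc in enumerate([0.78, 0.7805, 0.79, 0.7901, 0.789, 0.7902], start=1):
        tracker.record(round_id, auc)
    if tracker.plateaued:
        print("Plateau reached: change strategy, e.g. search for outside ideas")
```

A threshold that is too strict makes the counter trip early, since small genuine gains are never promoted. That is one reason an acceptance threshold may need to be relaxed late in a run.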

Thoughts

With clearly defined objectives and boundaries, an LLM can iterate on its own and uncover most of the early low-hanging fruit much faster than a human can. However, more distant ideas—such as KNN-based approaches or sequential modeling, which require a more creative leap—seem harder for it to discover. That said, the LLM only spent two days exploring the solution space. Without internet access, it is not obvious that a human could have found those ideas within the same time frame either. My guess is that the top-performing team spent far more than two days to achieve its breakthrough, and likely involved multiple team members.

What stands out most is how much cheaper it becomes to try new ideas. The cost of experimentation drops sharply in terms of both time and human effort. With its coding ability, the LLM can translate modeling ideas into working code extremely quickly. Generating roughly 300 additional features, running hyperparameter tuning, stacking multiple models, and building sequential representations of the data, all within two days, would be expensive for a human team. To do the same amount of work, we might need five to ten experienced practitioners. In my case, I did not even use the full token budget of the Claude Max plan (USD 100 per month).

Will humans lose their jobs? Partly, yes. LLMs will increasingly replace a large share of the coding, pipeline construction, and some feature engineering work. But they still need humans to provide direction. The human role will shift toward defining the scope, constraints, and objectives of the task, and then validating the outputs. At least for now, humans also seem to have better taste—in the sense of searching the solution space more efficiently and recognizing which directions are truly promising. We sometimes hear stories of LLMs surfacing obscure old papers that solve a problem outright, which suggests that this advantage may not always hold. But on average, I still think humans with domain knowledge retain an edge.

Will that remain true as we use LLMs more and progressively transfer domain knowledge into them? I do not know. If forced to give a rough estimate, I would guess that within five to ten years, LLMs may surpass humans even in the discovery of previously unknown solutions.

What seems much clearer is that, over the next five years, human-plus-AI will dominate human-alone workflows. The productivity gain is easily an order of magnitude for many existing tasks, and in some cases effectively unbounded because AI enables work that would not have been attempted otherwise. If you are not using AI every day to learn new things, you are likely falling behind. If you are not using it to accelerate coding-related work, you are almost certainly moving much more slowly. And if you are not using it to help generate new ideas and solutions, you may already be at a meaningful disadvantage. Once these effects compound, the gap between human-only and human-plus-AI workflows becomes very large.

Next Step

If the current limitation is the LLM’s “taste,” then the next frontier is not just better models, but better ways of searching the solution space. The key challenge is to help the LLM explore more intelligently, so that it can identify promising directions earlier instead of relying mainly on broad trial and error. Early ideas include tree-of-thoughts and Monte Carlo Tree Search (MCTS), which I will try to implement next week.

I also need to better maximize the available token budget. During this project, the agent never came close to exhausting the token allowance of the Claude Max ($100/month) plan. In practice, a considerable amount of time was spent waiting for model runs to finish while the agent was otherwise idle, suggesting that I did not make full use of the available capacity.